
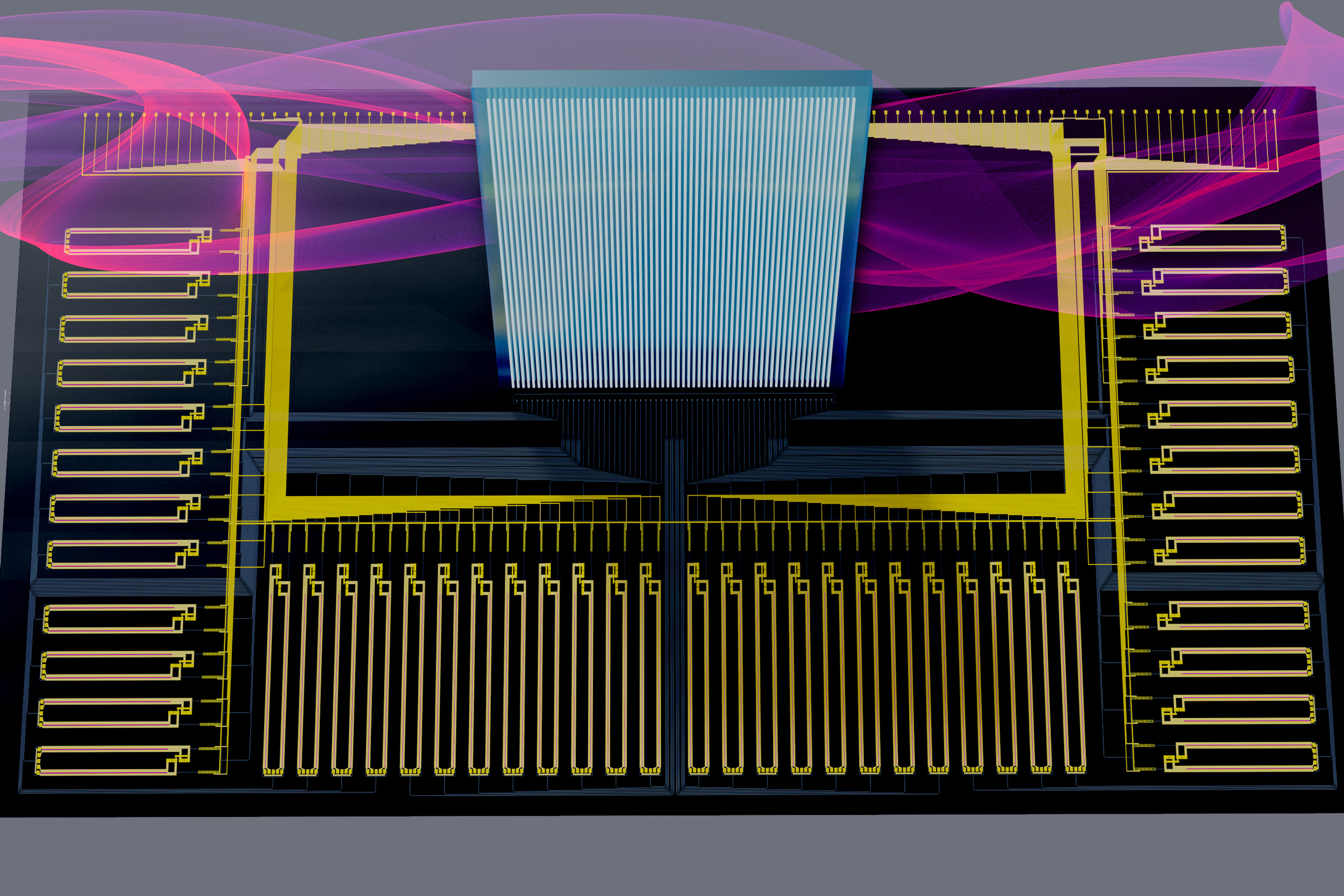

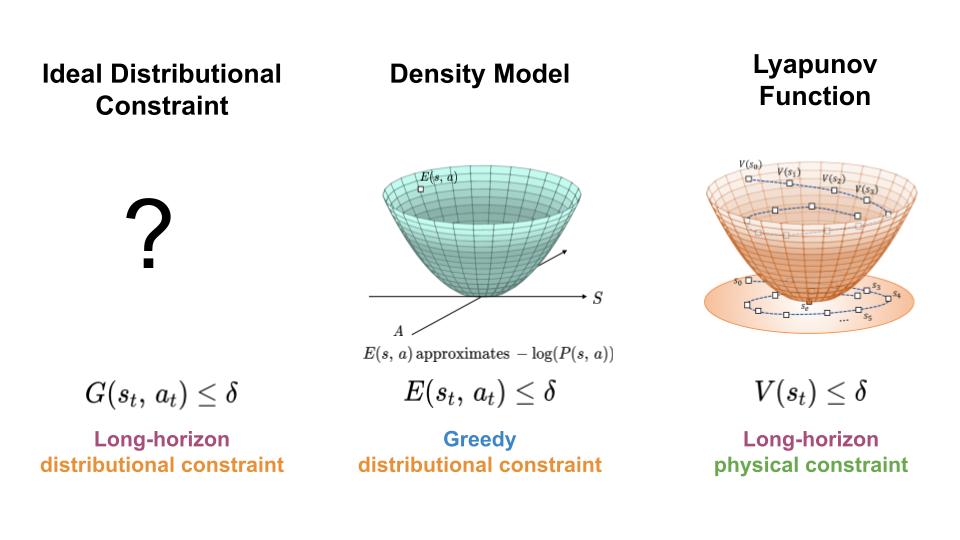

To control the distribution shift experienced by learning-based controllers, we seek a mechanism for constraining the agent to regions of high data density throughout its trajectory (left). Here, we present an approach that achieves this goal by combining features of density models (middle) and Lyapunov functions (right).

To use machine learning and reinforcement learning to control real-world systems, we must design algorithms that not only achieve good performance, but also interact with the system in a safe and reliable manner. Most prior work on safety-critical control focuses on maintaining the safety of the physical system, e.g., preventing legged robots from falling over or autonomous vehicles from colliding with obstacles. However, for learning-based controllers, there is another source of safety concern: because machine learning models are only optimized to output correct predictions on the training data, they are prone to outputting erroneous predictions when evaluated on out-of-distribution inputs. Thus, if an agent visits a state or takes an action that is very different from those in the training data, a learning-enabled controller may “exploit” the inaccuracies in its learned component and output actions that are suboptimal or even dangerous.
